Introduction

A medieval General is using messengers to communicate with his regiments, but he finds that using people to send messages is slow. After thinking, he realizes he could use drums for communication. Every regiment learns their distinct drum patterns to send reports and listen for commands. But in practice, the General finds that two regiments will often start drumming over each other and he is unable to decipher the message. This is because the regiments might start drumming at the same time, or one regiment won’t hear that the other is already drumming when they start. The potential for overlapping signals means the General won’t always understand the message, and the regiments can’t know if the General heard their message. How can the General prevent such costly communication failures?

Solutions to this type of problem are known as Medium Access Control (MAC) protocols, and are critical to the function of all modern digital communications. Instead of drums, we use radio waves, but the idea is the same. Our WiFi, cellular, and Bluetooth devices must all solve this problem. Because they operate under different constraints, they each solve it in a slightly different way.

Before we look at modern communications, let us travel back to 1969 to the islands of Hawai’i and discover how the first wireless computer network solved this issue.

ALOHA

On the island of Oʻahu, the main campus of the University of Hawai’i hosted a powerful IBM computer. Nearby community colleges wanted to connect to this powerful computer, but unfortunately these colleges sat on separate islands. Laying down a cable to create a point-to-point network, like the experimental ARPAnet of the time, would have been expensive. So, they decided to use this as the perfect excuse to research implementing a wireless computer network.

Thus was born the ALOHAnet.

The goal was fairly simple: enable all the islands to communicate with the IBM machine at the same time. This meant a radio on every island, each trying to communicate with the base station.

One big issue presented itself: If two islands tried to communicate with the base station at the same time, their radio signals would overlap and become indecipherable, exactly the issue our General struggled with when listening to multiple regiments at once.

This is where we introduce the first MAC protocol, today known as Pure ALOHA.

Take a moment to try and solve the problem yourself. How could the regiments minimize collisions, or at least make sure their message was heard by the General? Later we will see how WiFi solves this issue using a single communication channel, but ALOHA had it easier since it used two frequencies for its protocol. In our army metaphor this can be represented as being able to use both drums and horns, where different instruments can each communicate without interfering with the other instruments.

Below is the description for Pure ALOHA, the first-ever, random-access MAC protocol for a wireless computer network.

Pure ALOHA

When you have a message to send, immediately send it. This may collide with someone else, so you need to get back an acknowledgement from the base station (the General) confirming they got your message. We might worry that the acknowledgement message could collide with another message being sent at the same time, but ALOHA uses two frequencies: one for sending messages to the base station, and the other for sending messages from the base station, so the base station’s acknowledgment will not collide with incoming messages. This also means that when the base station sends you information, it doesn’t need an acknowledgement back.

If you don’t get back the acknowledgment (called an ACK) in a short timeframe, try sending the message again. But we need to be careful here. Imagine everyone immediately tries to resend their messages after they fail; what would happen? Two users who just interfered with each other would immediately resend and interfere again! And this would repeat forever. To solve this, you need to wait a random amount of time before resending the message. Eventually, by chance, messages will stop colliding (as long as there aren’t too many stations). In the original paper the authors sample their random delay from an exponential distribution, but later we will see how this can be improved.

Below is a simulation of Pure ALOHA with three stations talking to a base station. Stations will begin a backoff immediately after sending a message, with a minimum delay to allow for an ACK to be sent back, in which case they cancel their backoff.

We denote a message being sent from a station as a colored bar with full height, and a message being heard as a bar at half height. A message is not actually received unless the entire message is heard without interference, so we mark messages as striped until the entire message is correctly received. Messages are sometimes heard by all stations (such as the ACK from the base station), but they are intended for only one station (whose name is in the message), so we color the message darker for the intended station and semi-transparent for other stations.

Code for the animation is here.

We see when a station sends a message, the base station decodes it, then sends back an ACK. When two stations send messages that overlap, the base station is unable to read either (think the General hearing two drums at the same time) and the ACK is not sent back. When we see all the stations try to send a message at around the same time, notice it is harder for each message to be lucky enough to finish before another starts. We also see at one point an overlap between the ACK from C and the message from B, but nothing interferes. This is because the stations are sending on a different frequency than the base station, so messages between the two do not interfere.

The protocol worked! Although, it’s not very efficient. You can look at the original paper to see that they calculate the efficiency of this algorithm to be 18.4% of the theoretical optimal throughput (the paper has a typo of 18.6%). They also calculate that the network can only handle up to 324 users, after which, there is so much interference that not a single message can make it to the base station. But typical traffic for ALOHA was small enough that this protocol was sufficient.

This was followed by many improvements, such as using slotted ALOHA, which increased the throughput to 36.8% efficiency by using time slots. And these improvements only continued. But more interesting was the development that ALOHA inspired in other mediums.

Ethernet

Immediately after the success of ALOHAnet, it was recognized that a similar protocol could be created for wired networks. In fact, this similar protocol would be adaptable to any medium that can carry a signal. This new protocol was called Ethernet, inspired by the historical concept of the luminiferous ether. This protocol would allow any number of computers to talk to each other over any medium, such as through air, water, or a wire.

Typically, Ethernet uses a single wire that every computer connects over, and every node is treated equal. Lack of a leader makes coordinating devices much harder, but a single wire makes it easier for nodes to monitor what is happening on the network. This physical medium was novel compared to what previous networks had been built for. To compare: ARPAnet, the precursor to the internet, used a directional wired point-to-point connection between each device, so messages never interfered, but messages had to be routed through a complex network of devices. ALOHAnet used a wireless connection to a central base station, which meant nodes could be coordinated by a central coordinator, but they couldn’t tell what other nodes were hearing. Because of all these differences, Ethernet was able to utilize new methods compared to these previous networks.

Here’s how you would set up an Ethernet network: Lay a cable around the room, then clamp each computer onto the cable. That’s it; you now have a functioning network. Unfortunately, we must again solve the issue of coordinating all the computers so they don’t talk over each other.

Computers on the wire can listen to the wire at the same time as they transmit over it. This is quite powerful. Before, in the wired setting, the transmitter had to be as loud as possible to reach the far-away radio, meaning, if a radio tried to listen while it transmitted, all it would hear was its own transmission. Imagine in our drummer analogy, the drummers need to be as loud as possible to be heard, but that means they can’t hear other drums. But on a wire, this problem doesn’t exist. It takes almost no power to transmit a signal to everyone else, so a computer doesn’t drown out its own receiver. And since we know everyone is listening on the same wire, we know that everyone will hear the same thing (save for a very small propagation delay). These two useful facts allow us to build a much more powerful MAC protocol.

Ethernet Protocol

Because we know everyone is hearing the same thing, we can wait until the wire is quiet before we talk (this wouldn’t be as useful in ALOHAnet since we don’t know what the base station is hearing). This listening before transmitting is known as Carrier Sense Multiple Access (CSMA) and reduces the likelihood of collisions in Ethernet. It does not eliminate them though.

Just like ALOHAnet, users need to wait a random amount of time after the wire becomes free in order to avoid all talking at once. Sampling that delay from an exponential distribution, as ALOHAnet did, would be sub-optimal. Imagine we had a million computers on the network; the likelihood that at least two computers would pick roughly the same delay would be extremely high and the collisions may never end. Ideally, we scale our delay by the size of the network. We could develop a complicated protocol to track the number of computers on the network to do this, but there is a more elegant solution.

Ethernet developed exponential random backoff where, instead of sampling from a static distribution, it samples from a uniform interval that doubles each time a collision occurs, and then resets after a successful send. This scales the backoff interval to the size of the network dynamically, adapting to computers being added or removed from the network. Be careful to watch out for the channel capture effect, where one node with a large backoff gets starved out of access to the wire by other nodes with small backoffs.

Ethernet’s final improvement was to detect collisions immediately. If we listen to the wire while we are transmitting, we can compare what we are sending to what we are hearing. If they are different, then we immediately know a collision just occurred. This is necessary since there isn’t a base station anymore to tell us if a collision occurred. With the ability for immediate detection, the protocol is amended to be called Carrier-sense Multiple Access with Collision Detection (CSMA/CD).

The Ethernet cable has a small delay, so it would be possible for a node to transmit a short message and not hear that a collision occurred until after it finished transmitting, which would not be caught by CSMA/CD. So, Ethernet requires that a message must be broadcast for at least as long as a message takes to make a round-trip along the wire. This message size is typically called the Minimum Ethernet Frame Size.

Here we see a simulation of Ethernet with three computers. We exaggerate the delay of the signal traveling over the wire. When two computers try to send at around the same time, they will detect that the signal on the wire is not what they are expecting and start a random backoff. We increase the range of the random backoff each time a message fails to send.

Code for the animation is here.

Notice that there are no ACKs, just messages. We also see how nodes immediately handle collisions rather than waiting to see if their messages were received correctly.

It is important to note that what we call Ethernet today is nothing like what is described above, so we should mentally separate classic Ethernet and modern Ethernet as completely different protocols. Modern Ethernet uses direct point-point wires connecting devices to switches that route messages between devices, so no collisions need to be handled.

As powerful as classic Ethernet was, wired networks have physical limitations, motivating the development of newer wireless networks, such as WiFi.

WiFi

It took a while to get WiFi as fast as Ethernet, but after some deregulation and hardware improvements, WiFi went commercial in 1999. If you consider the WiFi network in your home, you may realize that this is a return to the scenario of a central base station wirelessly communicating with users, exactly like we had in Hawai’i’s ALOHAnet.

If we wanted to, we could just reuse the ALOHA protocol for WiFi. But because of hardware advances, regulatory constraints, and the intended use case for WiFi, it would benefit from a whole new protocol. The largest constraint is that WiFi only uses a single frequency for each router.

WiFi is going to be in apartments and stadiums, which means nearby WiFi routers may interfere. So while a single router will only use one frequency, we need nearby routers to use different frequencies. The hardware in WiFi routers uses a channel which is 22 MHz wide, and regulation only provided 83.5 MHz of that spectrum to use, meaning we can only fit up to 3 non-overlapping channels in the allowed space (recall that having different channels is helpful since each channel can communicate without interfering with the other). We will use these 3 channels to avoid interference between nearby WiFi networks, which means we only get a single channel for a router in a home (it is technically possible to create a router that can listen over all the channels at once, but it is more expensive and complex). This is harder than ALOHA, where we had the two channels.

Take a moment to consider how you might solve this. Think back to the General and his regiments; they only have drums, how can they send commands back and forth with minimal collisions?

WiFi Protocol

Users will send messages to the router and wait for an ACK, and if an ACK is not received shortly we assume a collision occurred. Instead of using a second channel for ACKs like in ALOHA, the ACK will be sent over the same channel and rely on the network being quiet while the ACK is sent. If there is ever a collision, either the data or the ACK, the node will just re-transmit the message a few moments later. This works, but there are a few additional improvements that can be made.

We can dramatically reduce collisions by using CSMA, which, as a reminder, means a node will listen to make sure the channel is free before transmitting, then wait a random delay using exponential backoff. Because of self-interference, we can’t tell if our transmission had a collision, so we must wait for an ACK from the base station to determine if a collision happened or not. This is called Carrier-sense Multiple Access with Collision Avoidance (CSMA/CA).

This collision avoidance has room for a further improvement. If nodes wait a small delay before and after the channel is free, in addition to the exponential backoff, this gives the base station some time to reply without interference. This delay time is called the Distributed Coordination Function Interframe Space (DIFS). Since the ACK is sent from the router immediately after a message is received (the router does not use this additional delay), DIFS essentially gives the router’s ACK a priority in the network and (nearly) guarantees that no one else will transmit over it.

This is how WiFi operates in most of our homes today.

Code for the animation is here.

We see how B and C can hear each other, so when B has a message to send, it knows to wait until C is done. But when A and C both need to send, there is a collision, as they can’t hear each other. WiFi works better when all the devices can hear all the other devices. If you find your router running slow due to this issue, known as the Hidden Node problem, there is an additional setting you can turn on in your router, known as RTS/CTS, which we explore next.

Hidden nodes

Imagine one regiment to the east of the General and another to the west, the regiments can communicate with the General, but are too far apart to hear the other regiment. Even if they used CSMA/CA, the messages would still end up colliding at the General.

This is a nasty problem known as the Hidden Node Problem. We saw an example in the WiFi simulation between nodes A and B, where they could communicate with the router but not with each other.

We see that B starts sending a message to the base station and A has no idea. In the middle of B’s transmission, A begins transmitting, as it believes the airwaves are free. Both messages are corrupted in the middle.

This problem shows up in many communication scenarios, not just packet-based networks (networks that split messages into small packets) like the ones we have been looking at. Here are two examples of where the Hidden Node problem causes very serious problems in analog networks (networks that send the entire message at once and decode it in real time).

- Pilots talk to a control tower over shared analog channels, so sometimes their communications interfere. You can tell when this happens because the audio becomes a squeal (this is what interference sounds like). This can cause critical information to be lost, such as we saw in the deadliest accident in aviation history, where two pilots talked over a shared channel at the same time, causing interference and warnings to go unheard, which was one of the many contributing factors to the disaster. This is still a problem today.

- Walkie-talkies use a single analog channel and are also prone to the Hidden Node problem. Again, this is a technology used in high-stakes scenarios such as firefighting and search and rescue. Unfortunately, in these scenarios, mountains and buildings are a common obstacle, increasing the likelihood of the Hidden Node problem.

Now back to WiFi. Our CSMA/CA protocol does not account for collisions due to the Hidden Node problem. To solve this, the base station (who can hear and talk to all the nodes) will pick one node at a time and tell all the other nodes to be quiet. The selected node can talk for a few moments, then the router picks the next node. In order to choose which node to select, the router picks the first node that sends it a request (read as the first request that didn’t collide). Here’s how that looks in more detail.

Nodes wait for the channel to be free (CSMA), wait a tiny window (DIFS) to let ACKs take priority, wait some random time to avoid all talking at once (exponential random backoff), then finally send a tiny Request-To-Send (RTS) packet to the base station. The base station will accept the first valid RTS packet it receives, then send back a Clear-To-Send (CTS) packet. When nodes hear a CTS or RTS packet unrelated to them, they will know they were not the first node to make a request and will wait until the next time they can make a request, determined by a time specified in the packet (this is called Network Allocation Vector (NAV)). When a node gets a CTS meant for them, they will transmit their data for the allotted time.

Below is a simulation of WiFi with RTS/CTS. Watch how node A uses a CTS+NAV backoff to track when the router is occupied, even though node A can’t hear the transmission from node B. Using the NAV to detect traffic outside a node’s range of hearing is called virtual carrier-sensing.

Code for the animation is here.

We see that hidden nodes A and C now avoid colliding, unlike previously. This is because of the NAV virtual carrier-sensing, where node A can now detect when node C is sending. Note that this reduces, but does not eliminate, the Hidden Node problem. RTS and CTS packets can still collide, as we see when all the nodes attempt to transmit at once and the RTS packet of node A and B collide.

Hidden node issues should now be relegated to just the RTS/CTS packets (ignoring nodes just joining a network for the first time), and small packets are less likely to collide and can recover faster, so we should expect much smaller intervals of interference. We essentially just shrunk the interval of time in which the Hidden Node problem can occur.

But oftentimes RTS/CTS is turned off on your router, as plain old CSMA/CA does well enough, and the overhead of RTS/CTS can sometimes outweigh the benefits it provides.

We’ve been looking at WiFi in a house with a few devices, but let’s see how it scales. An interesting case study is the use of WiFi in stadiums.

Getting WiFi to work in a stadium

Some stadiums can have over 100,000 people packed into dense seating, where each person is carrying a phone that’s transmitting data, such as streaming video of the halftime show. That’s a lot of communication; this is much more difficult than the WiFi in a home. Well, let’s see how you might solve some of these issues and supply WiFi to a stadium like this one.

Give it a thought and see if you can predict the issues we run into and how we might solve them.

Stadiums are quite large, so our first guess might be to place a super powerful router in the center of the stadium so it can reach everyone. Technically this would work, but it faces some issues. If there are 10,000 people all trying to talk to a single WiFi router at once, then most of the time will be spent trying to recover from collisions. Even if we could magically remove the collisions, everyone would only get 1 / 10,000 of the airtime to send their message, meaning they have to wait minutes at a time to send a picture.

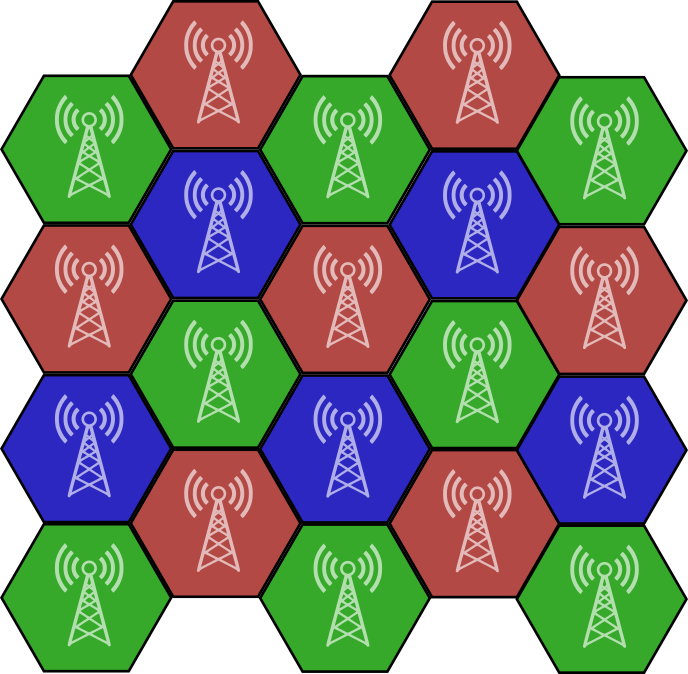

So now let’s place hundreds of routers throughout the stadium. We’ll also lower the routers’ power so each one only has to communicate with at most a few hundred people at a time. Note that the smaller we can make the communication radius the better, although the more routers (and money) we will need. But now these routers are all interfering with their neighbors. Recall that the WiFi protocol reserved a few frequencies for just this purpose. We can carefully assign the 3 channels such that neighbors don’t interfere with each other.

This is sufficient, but there is still some interference from the phones in one cell transmitting loud enough to interfere with far-away cells of the same frequency. We turned down the routers power to avoid the interference, but people’s cell phones don’t do this. The more recent 5 GHz WiFi has 19 non-overlapping channels, which means we can be more clever with our channel assignments, maximizing the distance between cells of the same color. We could also assign those 19 channels to load balance the cells or to avoid environmental interference; there are a lot of ways you might assign these frequencies. This is called the Frequency Assignment Problem, and there are many solutions. You could pretty easily invent some for yourself (I recommend looking into the related problem of the Four Color Theorem if you do). More sophisticated assignment algorithms might even dynamically assign channels to do load balancing and avoid interference in real time.

Even with the additional channels and sophisticated assignment algorithms, stadiums can still suffer from users’ devices interfering with the other routers in the stadium on the same channel. So people developed a more practical solution. They decided to place the routers underneath the seats, using human bodies to dampen the signals. This is quite clever. Interference grows with the number of people in the stadium, and now the dampening mechanism also scales with the number of people in the stadium. With this knowledge, now you know if you need a better signal in a stadium, you should probably bring your phone as low as possible.

But even stadiums are far from the largest networks we need to manage. Moving onto cellular networks.

Cellular

Once wireless networks had been proven out, it was time to replace the outdated rotary phones with wireless ones.

The first wireless phone network was the Mobile Telephone Service (MTS), which used a single tower to relay calls. It was a circuit-switched network that assigned two dedicated frequencies for each call (one for each direction) and relied on people retrying their call if they heard interference. This is similar to the CSMA/CD protocol, but people are executing it instead of machines. Because this single tower lacked the ability to reuse frequencies, the capacity was incredibly low. In a city like New York, only a few dozen people could be on the phone at the same time. If the channels were full, you simply had to wait and try again later.

The first scalable wireless cellular phone network was the Advanced Mobile Phone System (AMPS), built by Bell Labs in 1983. It placed towers in a hexagonal grid pattern, forming cells in which frequencies could be reused for distant users. This is why it’s called a cellular network. It was able to seamlessly reassign users to new towers and frequencies as they moved between cells. A charming and highly informative commercial explaining AMPS can be seen here. But this is still a circuit-switched (dedicated frequency to each user) network, so it was still limited in its scalability.

AMPS was a first-generation (1G) cellular network, and it wasn’t until the 3G cellular networks that we introduced a packet-switched network where channels can be shared across all users sending small packets of data. These packets allow us to have more control over how we distribute traffic across available resources.

Cellular networks use a large number of frequencies within a cell, where a different packet can be sent over each frequency, which is known as frequency-division multiple access (FDMA). Each frequency can be broken into time slots so messages can be spread across time, which is known as time-division multiple access (TDMA). We can also reuse these frequency-time blocks across different regions of a cell by using directional antennas, so different regions in the cell will not interfere, sometimes called space-division multiple access (SDMA). These resource blocks of frequency-time-space do not interfere with each other and so allow a huge number of packets to be sent from different devices in the same cell.

Note that Multiple Access methods (MA) are distinct from MAC protocols: MA focusing on how the physical medium is shared and MAC referring to the protocol rules managing that sharing. However, because they are so deeply intertwined, they are frequently used interchangeably.

Another clever multiple access modulation scheme is called Code-division multiple access (CDMA), which spreads data over a long period of time and modulates it with a “spreading code”. When multiple devices transmit over the same frequency at the same time with CDMA, a cell tower can use the known spreading codes to mathematically disentangle the different data streams.

All these schemes are known as orthogonal Multiple Access schemes (CDMA is nearly orthogonal), and they allow for a combinatorial explosion of packets to be sent. For the future 6G cellular networks, people are looking into the even more flexible non-orthogonal Multiple Access schemes. If you find this interesting, there are many areas of research in this area you can dive deeper into.

Each cell does still have a finite number of resource blocks to allocate, so as a cell begins to saturate (maybe because a city gets denser, or a spot becomes a tourist location, or a stadium is built), the large tower making up a cell can be replaced by multiple smaller towers, allowing for more resource reuse in the same area.

Collisions almost never occur in cellular networks because cell towers provide their devices a schedule for what frequency-time-space(-code) resource blocks each device can send over. One place we need to be very careful when building schedules is for devices that are moving between cells, making sure they do not use resources used by anyone in the neighboring cell they are moving into.

The only time we have a chance for collisions is when a device first joins the network. There is a dedicated channel that is called the random-access channel, where devices randomly attempt to register themselves into the network, retrying when they fail to hear back an acknowledgment after a short period of time. Because this only needs to happen once, it is ok if it takes a few tries. This is ALOHA where you can add FDMA or other Multiple Access techniques to decrease the chance two messages collide.

Because of the infrastructure of cell towers and the huge number of orthogonal modulation schemes, we are able to efficiently schedule resources for millions of devices across a country, maximizing throughput with minimal collisions.

We now look at a far more difficult environment, that of ad-hoc (no infrastructure) mesh networks.

Mesh

Mesh networks are the most difficult networks to design for as they are the most general. If you don’t have any infrastructure, such as cell towers or fiber optic cables or WiFi routers, then the devices themselves manage the network. Oftentimes, devices will move, or join or leave the network at any time. Since mesh networks are built to operate under these harsh constraints, they are often very useful in harsh environments such as outer space or battlefields.

Imagine a space mission to a new planet, infrastructure would be hard to set up initially, so a mesh network would be helpful. Or if you want a backup network in case the primary one fails in a natural disaster or gets shutdown by a government, a mesh network can be spun up anywhere at any time. If you need to suddenly perform a search-and-rescue mission for people stuck in a cave system, then an ad-hoc network is essentially the only option you have. Or maybe you just want a quick network for your music festival in the middle of the desert.

In our regiment metaphor this would look like we removed the General and now regiments have to communicate among themselves.

The largest issues in mesh networks are: the Hidden Node Problem, that nodes can join and leave, and that nodes can be dynamic. Another issue, small, but still important, is the Exposed Node Problem, where nodes hearing traffic avoid transmission themselves, but the receiver of their transmission is out of range of the traffic and would have been able to receive the packet if the node had sent it. As networks get larger, this becomes a major bottleneck.

Let’s start at the beginning, which as usual, is with DARPA.

DARPA SURAN Project

In 1973, DARPA funded its first mesh networking project, called The Packet Radio Network (PRNET), followed by the Survivable, Adaptive Networks (SURAN) Program, which then branched into a number of different programs. SURAN was an ambitious program to create mesh networks for the battlefield for a large number of nodes. Its purpose is well stated in its paper, which said

DARPA sponsored the Survivable, Adaptive Networks (SURAN) Program to research and develop network technology capable of supporting communication between computers and their users in the modern battlefield. This environment possesses characteristics that in the past have been incompatible with reliable communication, such as large numbers (thousands) of nodes, dynamics (mobile nodes, node failures, changing traffic demands), and sophisticated attempts to disrupt the network (intelligent jamming, message modification).

But how did they do this?

SURAN is what we would today call a cross-layer program, managing both the routing of messages and the MAC protocol together. It would track the health of a link (might be jammed, overly congested, or the environment is just bad), re-route messages through healthier paths in the network, adjust the radio power of a node to adapt to changes in density of the network, and manage the rate of sending messages based on the network health. All together this made a highly dynamic and survivable network.

Collision avoidance is a small component of this interconnected system, but if we were to isolate it, it would be random-access using spread-spectrum. Nodes send their packet, and if they don’t hear an ACK after a short period, they update their view of network health and re-send (they might send to a new neighbor or wait longer, depends on how the rest of the SURAN system responds to the update of the network health). Collisions are minimized using CDMA (recall this means spreading messages across time using a spreading code), meaning almost no coordination is needed.

As networks become denser, interference rather than coordination becomes the limiting factor (again, a consequence of using CDMA). SURAN addressed this by combining the spread-spectrum access with adaptive transmission power and dynamic routing, allowing the network to maintain connectivity and performance while reshaping its topology and traffic patterns.

SURAN was powerful, but overly complex for the widespread needs of commercial products, which were typically smaller in scale and more domain-specific.

Bluetooth, Zigbee, LoRa

There are a few different commercial protocols that are used today, with slight variation between them.

Bluetooth

It turns out that people love to be covered in tiny networks. People like to have their phone connected to wireless earbuds, smart watches, and computers all at once. Bluetooth was invented to optimize communication for these types of networks, often called personal area networks, which is where small devices are all sitting near each other.

In these networks there is one device which is the “main” device, such as the cell phone connecting to everything else. Because of this, Bluetooth uses a master-slave architecture where one device is in charge of all the other devices. People also usually don’t have that many devices, so classic Bluetooth only allows up to 8 nodes (including the master node) in the network. These small bluetooth networks are usually called piconets.

This is classic Bluetooth, and it is not actually a mesh network. You can see that it has the same structure as WiFi. It does differ from WiFi by exchanging complexity for power efficiency, as we’ll see below.

Whoever initiates the Bluetooth connection is the master device, although the role can be re-negotiated later. New nodes can be added by pausing the piconet and having the master send out connection requests, which potential nodes will respond to using random backoff. This means establishing links is done using Pure ALOHA initiated by user request.

For typical communication, Bluetooth devices use very small frames where the master will send a packet to a slave, then the slave can send a packet back. The schedule for who can talk is determined by the master device, which will commonly use round-robin. This is the power-intensive component of a Bluetooth network. Note that because Bluetooth uses this call-response method, no collisions are possible (except for joining).

Bluetooth does have one funny feature, where each frame is sent over a different channel. The data will be sent out over one of 79 channels 1600 times a second. The schedule for the frequency is determined by the master device ID and the clock. New nodes can determine the schedule by listening for the current time and the master ID. This is called frequency-hopping spread spectrum. The reason it does this is to avoid interference. Bluetooth operates on the same frequencies as WiFi, so your earbuds might be interfering with your WiFi connection. But with adaptive frequency hopping, Bluetooth will monitor each channel and the master device will dynamically avoid the noisy ones.

Let’s look at a real mesh MAC protocol.

Bluetooth Mesh

Bluetooth introduced a different protocol for mesh networks, called Bluetooth Mesh. Instead of small piconets with master and slave devices, we have an arbitrary number of nodes all talking to each other as equals. Bluetooth Mesh runs off the Bluetooth Low Energy (BLE) Link Layer architecture, which we will look at here.

BLE uses 3 channels (for advertizing mode, which is the primary mode used) and assumes minimal traffic (for example, a message to turn a lightbulb off). The protocol is simple: Wait a random amount of time (0-2 milliseconds) and then send the packet on every channel. Repeat this a few times (sometimes 3 times, but configurable on each node) to increase the chance that neighboring nodes receive the packet. No ACKs, CSMA, or collision avoidance of any kind.

This repetition is the only attempt to avoid collisions. This is sufficient in low-communication networks, but you can imagine how this quickly fails in large networks or for heavy traffic.

Zigbee

Sensor networks are common in warehouses or smart homes or smart cities, and they also have unique network characteristics. Many protocols have been developed for this environment, Zigbee being one commercial example.

Zigbee uses CSMA/CA, nearly identical to WiFi. Sending from one node to another matches the behavior we saw with WiFi, but it differs in that nodes in Zigbee can also send out a broadcast to all neighbors, which unfortunately means it can’t tell if there was a collision, as some may have collided while others did not. This means that we can confirm messages from point-to-point, but not for broadcasts.

Zigbee also has additional features built on top of the MAC protocol to allow devices to save energy by putting the device to sleep. You can imagine this means that the network is fairly dynamic, with nodes turning on and off fairly consistently.

And now let’s look at an example of a more extreme environment.

LoRa

Some radios are long-range (LoRa) and extremely low power, often used for sending short texts in natural disasters. This means that traffic is highly infrequent and small.

Because of the heavy emphasis on power management, devices will often turn off for long periods of time in between transmissions. This means managing a complex protocol is not feasible. Fortunately, because of the slow traffic, you often will not get collisions, even if you send at random.

LoRa-based networks will almost always use an ALOHA channel, just sending when there is data to send and waiting for an ACK, resending on a timeout. Sometimes the ACK is optional, such as in one of the more popular LoRa protocols: LoRaWAN.

Often in these extreme situations, simplicity is the best answer.

Trucker Radios

Trucker radios typically communicate over a single analog channel, so collisions are common. Because it’s analog, people have to run the Medium Access Control protocols verbally.

Before people talk over the channel, they listen to make sure no one else is already talking (CSMA), and then they will talk. Responses are expected to be immediate so the conversation can be easily detected as over by others. Collisions are common, so there are common phrases to let a person know that they were “stepped on” or “doubled”. One helpful rule truckers use to minimize the chance of collisions is to keep messages as short and dense as possible.

Can you design a better protocol for talking over trucking radios, or justify why the current protocol is the best? Note that we have one channel, it’s wireless, and traffic is infrequent and bursty. It is also worth looking into the benefits of an analog network over a packet-based one.

VANETs

Vehicular ad-hoc networks (VANETs) typically use a MAC protocol nearly identical to WiFi, where CSMA/CA is used with RTS/CTS packets. This does not handle the Hidden Node problem well since it is using the WiFi protocol in a dense mesh environment. This is a known limitation of the current implementation, but TDMA-based solutions are now being explored.

Other Medium Access Control Protocols

Here are some ideas that are either old and no longer around today, or too new or academic to be widely known. This is just a tiny selection of mesh MAC protocols out of literally hundreds of variants, so if they spark interest, you can explore more of them here and here.

Hybrid TDMA-CSMA

For different kinds of traffic you can layer multiple protocols next to each other, separated across channels or time. For example, hybrid TDMA-CSMA splits the protocol into two time slots, a TDMA and CSMA slot. Different slots allows different traffic types to use the most appropriate protocol depending on if it is small bursty traffic or a large transmission.

This can be useful if you realize your traffic patterns contain multiple traffic types. You are not restricted to using a single protocol for a situation.

MACA

One funny idea was to get rid of the Collision Sense (listening to make sure not to transmit) in CSMA/CA and just use RTS/CTS packets to determine if a node was free. This was proposed since the hidden node problem made Collision Sense an unreliable measure for potential collisions, so why not get rid of it? This was called Multiple Access Collision Avoidance (MACA).

It is not used much today since the protocol wastes a lot of time sending RTS/CTS packets, and Carrier Sense turns out to be useful since you often do not want to send out packets when you can hear a node transmitting.

Q-CSMA

This is an improvement on CSMA, where the interval you wait before transmitting is based on how much data you have to send (called the queue), so the nodes with a lot of data (a large queue) will get to transmit more often. One such protocol is called Q-CSMA, and there are many similar variants.

These are not widely deployed as general-purpose protocols today since they optimize for throughput and not latency. If a node has a small amount of data to send, it will have to wait a long time. You can of course modify these protocols to weight the older queues so they are more likely to transmit before getting too old, but this adds additional complexity. A lot of traffic we have today is latency-sensitive, so these variants of CSMA are mostly ignored.

Busy Tone

This algorithm is old and not used, but provides a novel solution to the hidden node problem. It uses two frequencies, although it only sends packets over one. It also uses slotted time intervals, so some of the race conditions do not appear (try and see below where we might see race conditions if we had asynchronous radios).

We can use one channel as a data channel and the other as a busy-tone channel. When a node wants to send data, they send out a Request-To-Send (RTS) packet, and if the receiver receives it, they start sending out a busy tone (constant signal, no decoding needed) over the busy-tone channel. The sender can begin transmitting once they hear the busy tone; otherwise, they just resend the RTS using exponential random backoff. The receiver will continue to send the busy tone until the message is finished (and maybe send back an acknowledgment if we are paranoid about ambient interference). This busy tone allows hidden nodes to know when their neighbors are receiving a message that the hidden node cannot hear. This algorithm is known as Busy Tone Multiple Access.

This does have some subtlety, like how to implement a busy tone in place of sending packets. If we tried to use packets, then busy packets could collide and get corrupted, which allows for protocol failures (try to figure out the failure case yourself; it requires a number of nodes). Multiple busy tones need to be able to interfere and still trigger neighboring nodes to wait. Also recognize that this protocol does not use CSMA and still avoids data collisions, which is pretty amazing. In fact, by not using CSMA, it produces a better solution with respect to the Exposed Node Problem.

This is an outdated protocol since it essentially wastes a full channel that might otherwise be used for increasing throughput.

These were a lot of outdated protocols, let’s take a look at some very recent Medium Access Control protocols for mesh networks.

DDMC-TDMA

We can use previous Multiple Access protocols to build more complex protocols.

This is the Dynamic Distributed Multi-Channel TDMA (DDMC-TDMA) protocol, which is essentially TDMA, except one of the frames is a “control” frame in which nodes figure out who gets to transmit in the following frames. Within the control frame the nodes will use ALOHA to determine who is sending on which frames, although the authors mention that we could experiment with other protocols in place of ALOHA. Because of the additional coordination built on top of the simple ALOHA protocol, we can solve the Hidden Node Problem and Exposed Node problem (and interference if on multiple frequencies) for the larger frames in which the majority of data is sent (although the issues still exists for the small coordination packets in the control frame).

What’s so neat about this is that we determined a schedule without any central leader such as a cell tower, it was a decentralized process.

Can we determine a schedule without the problematic control frames? Below is another recent protocol that attempts to solve exactly that issue.

KAMA

The Key-Activation Multiple Access (KAMA) protocol minimizes collisions in multi-hop networks by having nodes perform a decentralized election for each time slot. For every slot in a frame, nodes independently compute which node in their 2-hop neighborhood has the highest priority and may transmit.

Nodes maintain their 2-hop contention sets by occasionally piggybacking 1-hop neighbor lists in packets when inconsistencies are detected. For each slot, nodes deterministically compute a priority by hashing node IDs with the slot ID, producing a total ordering of contenders. The node with the highest priority among the known nodes in a 2-hop neighborhood is allowed to transmit, ensuring collision-free transmissions among those known nodes. Because the priority computation is deterministic, nodes with consistent neighborhood knowledge independently elect the same winner for each slot without coordination.

These were examples of some of the most recent advances in Multiple Access protocols for mesh networks.

Conclusion

There are many solutions our General could implement for his drum-based communication, I leave it to you to identify the best solution. It is useful to consider: What does the expected communication traffic look like? Do regiments need to communicate with each other? Is it ever acceptable for messages to be lost? What is the communication topology? Are you optimizing for latency or bandwidth? There are many considerations when picking the right MAC protocol, but now you have the basics to build from.